AI in Healthcare: When Machines Make Life-and-Death Decisions

There is a quiet revolution happening in hospitals and diagnostic centres. Artificial intelligence is reading scans, predicting heart attacks, and even suggesting treatment plans—sometimes before a human doctor reviews the case. For families navigating complex health decisions, this technology sounds like a miracle. But when the diagnosis involves a loved one’s life, a sobering question emerges: Should a machine have that much power?

The reality, as with most healthcare matters, is layered. AI in healthcare is neither the flawless saviour nor the cold, error-prone villain. Understanding where this technology excels, and where it dangerously falters, is essential for anyone making informed medical choices today.

How AI in Healthcare Actually Works Today

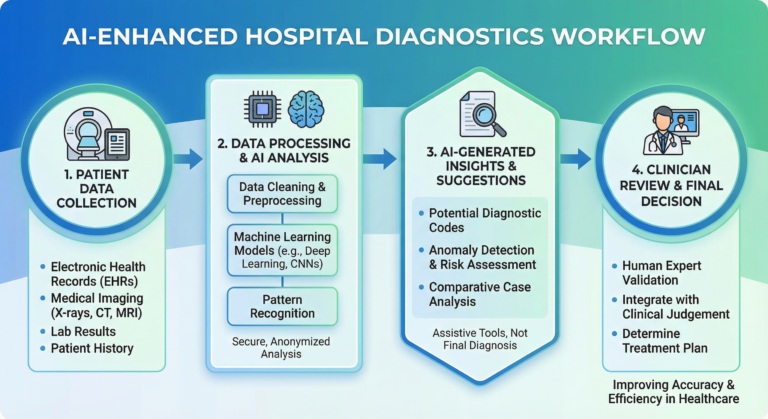

Before exploring the risks, it helps to understand what AI in healthcare actually does in current clinical settings.

At its core, medical AI refers to algorithms trained on vast datasets—millions of X-rays, pathology slides, patient records, or genetic sequences. These systems learn to recognise patterns that correlate with specific diseases or outcomes. When presented with new patient data, they generate predictions, flag anomalies, or suggest probable diagnoses.

Common applications include:

- Radiology assistance: AI tools analyse CT scans, MRIs, and mammograms to detect tumours, fractures, or early-stage cancers.

- Pathology screening: Algorithms review tissue samples for cellular abnormalities.

- Predictive analytics: Systems assess patient vitals and history to predict deterioration, sepsis risk, or readmission likelihood.

- Drug discovery: Machine learning accelerates the identification of promising molecular compounds.

However, it is critical to note that most AI tools currently function as decision-support systems—not autonomous decision-makers. A radiologist still reviews the flagged scan. A physician still prescribes the treatment. The machine advises; the human decides.

At least, that is the ideal.

The Promises: What AI Does Well in Medicine

Dismissing AI in healthcare outright would be intellectually dishonest. When deployed responsibly, these systems offer genuine advantages.

Speed and Scale

An experienced radiologist might review 50–100 scans per day. An AI system can analyse thousands in the same timeframe, flagging urgent cases for priority human review. In overburdened healthcare systems with long waiting times, this speed translates to faster diagnoses.
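To make the idea of "flagging urgent cases for priority human review" concrete, here is a minimal Python sketch of how an AI risk score might reorder a radiologist's worklist. The `Scan` class, the `ai_risk_score` field, the `triage` function and the 0.8 urgency cut-off are all hypothetical and chosen only for illustration; real products, scores and thresholds differ. The model only changes the order of review, and every scan still reaches a human.

```python
# Minimal sketch of AI-assisted worklist triage.
# Hypothetical illustration only: not any real vendor's API or threshold.
from dataclasses import dataclass


@dataclass
class Scan:
    scan_id: str
    ai_risk_score: float  # hypothetical model output between 0 and 1


URGENT_THRESHOLD = 0.8  # assumed cut-off, chosen only for this example


def triage(scans: list[Scan]) -> list[tuple[Scan, bool]]:
    """Return every scan, highest risk first, each paired with an 'urgent' flag.

    No scan is dropped: the score only decides the order of human review.
    """
    ordered = sorted(scans, key=lambda s: s.ai_risk_score, reverse=True)
    return [(s, s.ai_risk_score >= URGENT_THRESHOLD) for s in ordered]


worklist = triage([
    Scan("scan-001", 0.12),
    Scan("scan-002", 0.91),
    Scan("scan-003", 0.47),
])

for scan, urgent in worklist:
    label = "URGENT" if urgent else "routine"
    print(f"{scan.scan_id}: {label} (score {scan.ai_risk_score:.2f})")
# prints:
# scan-002: URGENT (score 0.91)
# scan-003: routine (score 0.47)
# scan-001: routine (score 0.12)
```

The key design choice is that the sketch returns every scan rather than discarding low-scoring ones. That keeps the tool in a decision-support role: it helps a doctor decide what to look at first, not which patients a doctor never sees.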

Pattern Recognition

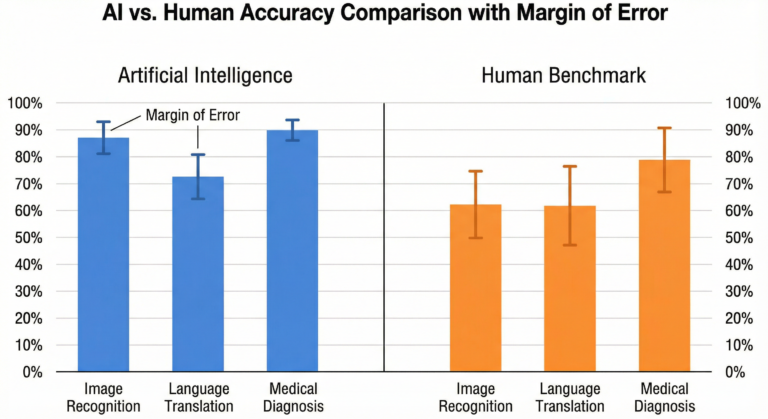

Certain AI models have demonstrated impressive accuracy in detecting early-stage diabetic retinopathy, some skin cancers, and specific lung nodules. Research published in peer-reviewed journals has shown that, for narrowly defined tasks, well-trained algorithms can match—or occasionally exceed—average specialist performance.

Reducing Human Error

Fatigued doctors make mistakes. Cognitive biases affect clinical judgment. AI, theoretically, offers consistency—it does not tire, rush, or have a bad day.

Accessibility

In under-resourced settings where specialist doctors are scarce, AI-assisted diagnostics can provide preliminary screening that might otherwise be unavailable.

Yet, every promise comes with conditions. And the conditions, in healthcare, can mean life or death.

The Risks: When Machines Get It Wrong

No technology is infallible, but errors in healthcare carry consequences far graver than a faulty product recommendation or a mistranslated sentence.

Data Bias

AI learns from the data it is fed. If training datasets predominantly represent one demographic group (by age, gender, or ethnicity, for example), the algorithm may perform poorly for others. Studies have documented how certain dermatology AI tools underperform on darker skin tones, and how some cardiovascular risk algorithms have historically underestimated risk for specific populations.

For urban families with diverse health profiles, this is not a theoretical concern. An algorithm that “works on average” may not work for your specific case.
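A simple calculation shows how a tool can "work on average" while failing a smaller group. The Python sketch below uses invented numbers, not results from any real study or product: a hypothetical test set in which one group is well represented and another is not.

```python
# Hypothetical illustration: every number below is invented for this example
# and does not come from any real study or product.
# Each subgroup maps to (correct predictions, total cases) on a test set.
results = {
    "group_a": (900, 950),  # well represented in the training data
    "group_b": (30, 50),    # under-represented in the training data
}


def accuracy(correct: int, total: int) -> float:
    """Fraction of cases the tool got right."""
    return correct / total


# The single headline figure pools all cases together.
overall_correct = sum(c for c, _ in results.values())
overall_total = sum(t for _, t in results.values())
print(f"overall: {accuracy(overall_correct, overall_total):.1%}")

# Breaking the same results down by subgroup tells a different story.
for group, (correct, total) in results.items():
    print(f"{group}: {accuracy(correct, total):.1%}")
# prints:
# overall: 93.0%
# group_a: 94.7%
# group_b: 60.0%
```

With these hypothetical figures, a headline accuracy of 93.0% hides the fact that the tool is right only 60.0% of the time for the under-represented group, because that group makes up just 5% of the test cases. This is why validation across diverse populations matters more than a single overall figure.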

Black Box Problem

Many sophisticated AI models—especially deep learning systems—cannot clearly explain why they reached a conclusion. They output a probability, not a rationale. When a machine flags a lesion as malignant, the physician may not understand the underlying reasoning. This opacity complicates clinical judgment and informed consent.

Over-Reliance and Automation Bias

There is documented evidence that when humans and machines collaborate, humans sometimes defer excessively to the algorithm—even when their own instincts suggest otherwise. A doctor might override their clinical suspicion because “the AI said it’s fine.” This phenomenon, known as automation bias, can be dangerous.

Accountability Gaps

If an AI-assisted diagnosis is wrong and a patient suffers, who is responsible? The software developer? The hospital that purchased it? The doctor who accepted the recommendation? Current legal and ethical frameworks have not fully resolved these questions.

AI vs Human Doctors: A Balanced Comparison

| Factor | AI Systems | Human Physicians |
|---|---|---|
| Speed | Extremely fast; can process thousands of cases | Limited by time, workload, and fatigue |
| Consistency | Does not tire or have off-days | Subject to fatigue and cognitive bias |
| Contextual Understanding | Limited; relies on structured data input | Can incorporate subtle patient history, emotional cues, and family context |
| Adaptability | Struggles with novel, rare, or unusual presentations | Can reason through atypical cases |
| Explainability | Often opaque ("black box") | Can articulate clinical reasoning |
| Empathy & Communication | Absent | Essential for patient trust and compliance |
| Accountability | Legally unclear | Clearly defined professional responsibility |

The ideal, most experts agree, is not AI replacing physicians but AI augmenting physician capabilities—each compensating for the other’s weaknesses.

Myths vs Facts About AI in Healthcare

Myth 1: AI will replace doctors within a decade.

Fact: While AI excels at narrow, well-defined tasks, medicine involves holistic judgment, ethical reasoning, and interpersonal trust. Regulatory, liability, and practical hurdles make full replacement extremely unlikely in the foreseeable future.

Myth 2: AI diagnoses are always objective and unbiased.

Fact: AI inherits the biases present in its training data. Objectivity depends entirely on dataset quality and diversity—something that remains inconsistent across many commercial tools.

Myth 3: If a hospital uses AI, the quality of care is automatically better.

Fact: AI is a tool. Its value depends on implementation, oversight, and integration with clinical workflows. Poorly deployed AI can create confusion, alert fatigue, or misplaced confidence.

Myth 4: Patients have no say in whether AI is used in their care.

Fact: Informed consent principles still apply. Patients have the right to ask how diagnostic decisions are made and whether algorithmic tools are involved.

What Experts Say About AI in Medical Decisions

The World Health Organization (WHO), in its 2021 guidance on the ethics and governance of artificial intelligence for health, emphasised that AI systems must prioritise safety, transparency, and accountability. The WHO explicitly cautioned against deploying AI tools that have not been validated across diverse populations or that lack explainability.

Similarly, research published in Nature Medicine and indexed on PubMed has repeatedly highlighted the “implementation gap”—where AI performs excellently in controlled research settings but inconsistently in real-world clinical environments.

Healthcare professionals interviewed by major medical journals consistently echo a theme: AI should enhance, not replace, human judgment. The physician’s role evolves from sole decision-maker to informed interpreter of algorithmic suggestions.

Dr. Eric Topol, a prominent cardiologist and digital medicine researcher, has written extensively about “deep medicine” (the title of his 2019 book): the idea that AI, by handling routine pattern recognition, could free physicians to spend more meaningful time with patients. But he, too, warns against uncritical adoption.

Practical Takeaways for Patients and Families

For health-conscious families navigating an increasingly automated medical landscape, here are grounded, realistic steps:

- Ask Questions

Do not hesitate to ask your physician: “Was any AI or algorithmic tool used in my diagnosis or treatment recommendation?” You have every right to understand the process.

- Seek Second Opinions

Especially for serious diagnoses, a second human opinion remains invaluable. AI-assisted or not, critical decisions benefit from multiple perspectives.

- Understand the Limits

AI excels at pattern recognition in common conditions. For rare diseases, unusual presentations, or complex multi-system illnesses, human expertise remains indispensable.

- Prioritise Transparent Providers

Hospitals and diagnostic centres that openly discuss their use of AI—including its limitations—demonstrate a commitment to ethical practice.

- Stay Informed, Not Fearful

AI in healthcare is evolving rapidly. Staying updated through credible sources (not sensational headlines) empowers better decision-making.

The Bottom Line

AI in healthcare is neither a perfect solution nor an existential threat. It is a powerful, imperfect tool—capable of remarkable assistance when thoughtfully deployed, and capable of harm when blindly trusted.

For families making critical health decisions, the safest approach remains a balance: leveraging technological advancements while insisting on human oversight, transparency, and the irreplaceable value of a physician who listens, questions, and cares.

Machines can process data faster than any human. But medicine, at its core, is not merely data processing. It is judgment, context, empathy, and trust. Those remain, for now, distinctly human domains.

External Authoritative References

- World Health Organization (WHO): Ethics and Governance of Artificial Intelligence for Health (2021) – https://www.who.int/publications/i/item/9789240029200

- PubMed / Nature Medicine: Research on AI performance gaps in clinical implementation – https://pubmed.ncbi.nlm.nih.gov/ (Search: “artificial intelligence clinical implementation healthcare”)